As a video game developer and gaming writer, I have had the opportunity to witness first-hand the incredible evolution of gaming graphics over the years. From the early days of pixelated characters and simple textures to the highly realistic, immersive worlds of today, the advancements in technology have truly transformed the gaming industry.

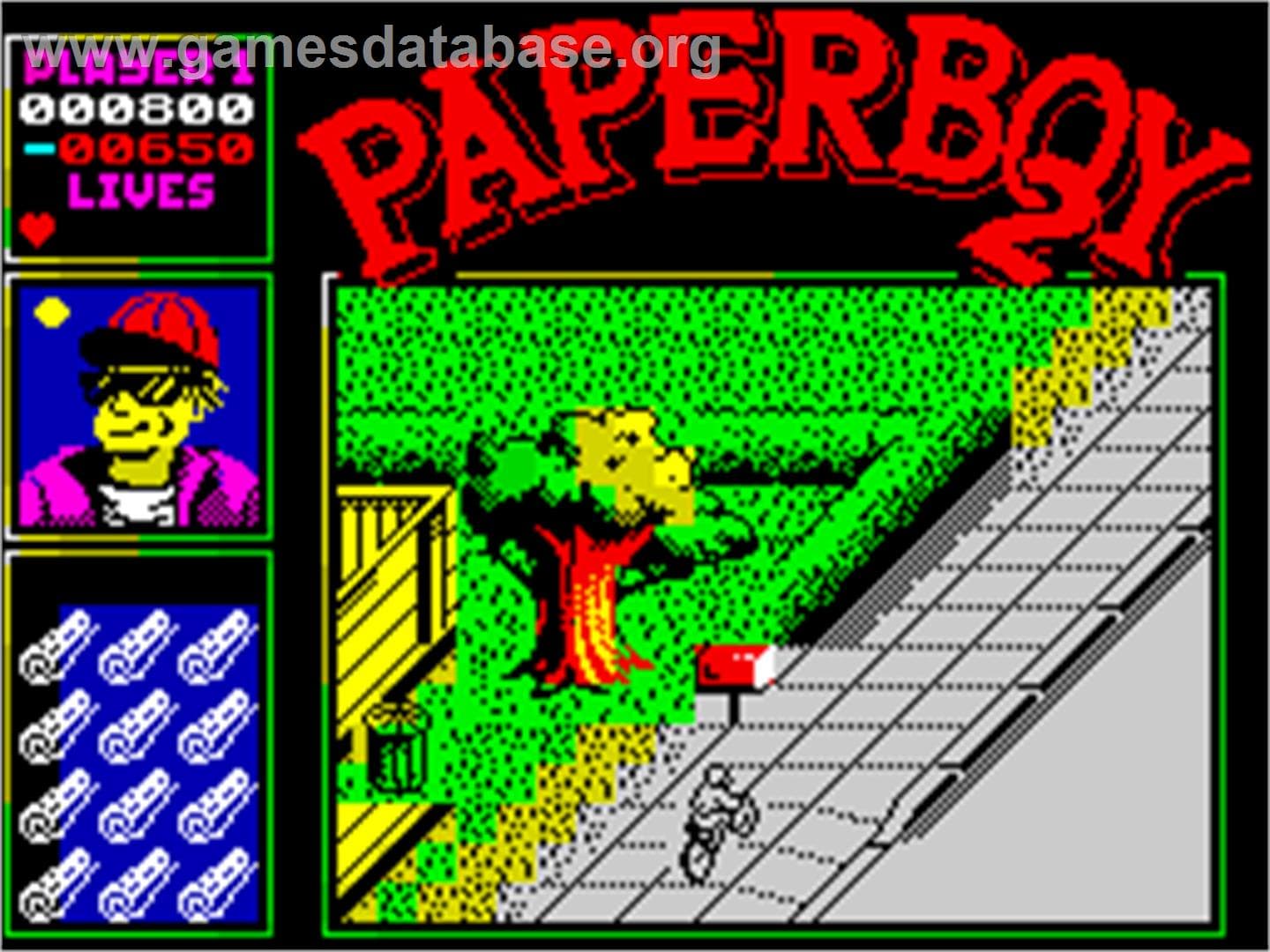

In the early days of gaming, graphics were limited by the technology available at the time. Pixels were large and blocky, and textures were simple and often repeated. The ZX Spectrum, for example, released in 1982, was a popular 8-bit home computer with a distinctive graphics system. Rather than a dedicated graphics processor, the machine used a custom chip called the ULA (Uncommitted Logic Array) to generate its display.

The ULA produced a fixed 256×192 pixel display with a palette of 15 colors: eight basic colors at two brightness levels, with black identical at both. Color was not stored per pixel. Instead, the screen was divided into 8×8 pixel blocks, and each block was assigned its own attributes: one foreground ("ink") color, one background ("paper") color, a brightness flag, and a flash flag. Only two colors could therefore appear within any single block.
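To make the per-block color attributes concrete, here is a minimal Python sketch of how one block's colors fit into a single attribute byte on the Spectrum (flash in bit 7, bright in bit 6, paper in bits 5 to 3, ink in bits 2 to 0). The function and constant names are made up for illustration and do not come from any real library.

```python
# Illustrative sketch only: helper names are hypothetical, not a real API.
# Colour indices 0..7 in the Spectrum's order.
COLOURS = ["black", "blue", "red", "magenta", "green", "cyan", "yellow", "white"]


def pack_attribute(ink, paper, bright=False, flash=False):
    """Pack the colours of one 8x8 block into a single attribute byte."""
    if not (0 <= ink <= 7 and 0 <= paper <= 7):
        raise ValueError("ink and paper must be in the range 0..7")
    return (int(flash) << 7) | (int(bright) << 6) | (paper << 3) | ink


def unpack_attribute(attr):
    """Split an attribute byte back into its four fields."""
    return {
        "ink": attr & 0b111,
        "paper": (attr >> 3) & 0b111,
        "bright": bool(attr & 0x40),
        "flash": bool(attr & 0x80),
    }


# Memory cost of the whole display: 1 bit per pixel plus 1 byte per block.
BITMAP_BYTES = 256 * 192 // 8               # pixel data
ATTRIBUTE_BYTES = (256 // 8) * (192 // 8)   # one byte per 8x8 block

# Bright yellow ink on blue paper for one block.
attr = pack_attribute(ink=6, paper=1, bright=True)
fields = unpack_attribute(attr)
```

Storing color per block rather than per pixel is what kept the whole display under 7 KB, which mattered on a machine with as little as 16 KB of RAM.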

This two-colors-per-block limit led to the Spectrum's best-known visual artifact, color clash (also called attribute clash). When a moving sprite overlapped scenery of a different color, every lit pixel in the affected block took on the same color, so colors appeared to bleed into each other. Developers worked around it with monochrome play areas or careful level design. Despite this limitation, the ZX Spectrum was capable of producing some impressive visuals for its time, and its unique graphical style is still fondly remembered by retro gamers today.
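Color clash is easier to see in a small simulation. The sketch below models a single 8×8 block as eight bitmap rows plus one ink and one paper color. It is a simplified illustration with hypothetical names, not how any particular game drew its sprites.

```python
# Simplified model of one 8x8 block: a 1-bit bitmap plus a single ink/paper pair.
GREEN, YELLOW, BLACK = 4, 6, 0


def render_cell(bitmap_rows, ink, paper):
    """Return the 8x8 grid of colour indices the display would show."""
    return [
        [ink if (row >> (7 - x)) & 1 else paper for x in range(8)]
        for row in bitmap_rows
    ]


scenery = [0b11110000] * 8  # green scenery fills the left half of the block
sprite = [0b00000011] * 8   # a yellow sprite touches the right edge

# Before the sprite arrives, the scenery is green on black.
before = render_cell(scenery, ink=GREEN, paper=BLACK)

# Drawing the sprite merges the bitmaps, but the block still has only one ink
# colour. Setting it to the sprite's yellow recolours the scenery as well.
combined = [s | p for s, p in zip(scenery, sprite)]
after = render_cell(combined, ink=YELLOW, paper=BLACK)

colours_before = {c for row in before for c in row}
colours_after = {c for row in after for c in row}
```

After the sprite is drawn, the scenery pixels in that block show yellow instead of green, which is the clash players saw around moving characters.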

As home computers and consoles grew more powerful, color counts and resolutions increased. The introduction of 3D graphics in the 1990s allowed for more realistic characters and environments, and increasingly powerful hardware allowed for even greater detail and realism.

The Nintendo 64, released in 1996, was a groundbreaking console and one of the first to be built around fully 3D graphics. It rendered them with a custom graphics chip, the Reality Coprocessor, designed by Silicon Graphics.

The N64’s graphics chip rendered polygons, which are the building blocks of 3D graphics. Its throughput is commonly quoted at roughly 100,000 polygons per second with its rendering features enabled, which was a significant step for a home console. The chip could also apply texture mapping to polygons, which added detail and realism to a game’s environments and characters.

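One of the most important features of the N64’s graphics system was hardware z-buffering. A z-buffer stores a depth value for every pixel on screen, and a new pixel is drawn only if it is closer to the camera than what is already there. Nearer surfaces therefore hide farther ones regardless of the order in which objects are drawn, which avoids many of the sorting glitches seen on hardware without a z-buffer. It did not guarantee smooth performance, though: demanding N64 games could still suffer from frame rate drops.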
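The idea behind z-buffering fits in a few lines. The following is a minimal software sketch in Python, assuming each shape has already been broken into per-pixel fragments of the form `(x, y, depth, color)`. The names are illustrative, and this is not how the N64 hardware implements it.

```python
import math


def render(fragments, width, height, background="."):
    """Draw fragments into a colour buffer, keeping the nearest one per pixel."""
    depth = [[math.inf] * width for _ in range(height)]
    colour = [[background] * width for _ in range(height)]
    for x, y, z, c in fragments:
        # Depth test: only overwrite the pixel if this fragment is closer.
        if z < depth[y][x]:
            depth[y][x] = z
            colour[y][x] = c
    return colour


def rect(x0, y0, x1, y1, z, c):
    """Fragments for a flat rectangle covering [x0, x1) by [y0, y1) at depth z."""
    return [(x, y, z, c) for y in range(y0, y1) for x in range(x0, x1)]


near = rect(1, 1, 3, 3, z=1.0, c="N")  # small square close to the camera
far = rect(0, 0, 4, 2, z=5.0, c="F")   # wide strip further away

# The image is the same whichever shape is submitted first.
near_first = render(near + far, width=4, height=3)
far_first = render(far + near, width=4, height=3)
rows = ["".join(r) for r in far_first]
```

Because the depth test is done per pixel, the near square covers the far strip only where the two overlap, and the draw order does not matter.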

The N64’s graphics system also supported hardware lighting, so the shading of characters and objects could respond to light sources in a scene and change, for example, with a game’s time of day. Additionally, the console featured built-in anti-aliasing and texture filtering that smoothed jagged edges and blocky textures, at the cost of the slightly soft image the console is known for.

The Nintendo 64’s 3D graphics system was a major breakthrough for its time and laid the foundation for the 3D console graphics that followed.

With the advent of the 21st century, gaming graphics reached new heights with the introduction of high-definition (HD) graphics and the use of advanced techniques such as motion capture, real-time rendering, and physics-based simulations. These advancements allowed for games to feature hyper-realistic characters and environments, as well as more dynamic and engaging gameplay.

Recent years have seen the introduction of 4K and 8K resolutions, real-time ray tracing, and physically based rendering, all of which make lighting, shadows, and materials more realistic. The game world becomes more believable and closer to the real world. VR technology adds to this, making the gaming experience more immersive than ever before.

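Quake II, released by id Software in 1997, is a useful case study in how real-time lighting has developed. The original game did not use ray tracing. Its engine, later known as id Tech 2, relied on precomputed lightmaps: lighting and shadows were calculated offline when a level was built and stored as textures. Real-time ray tracing came to the game only in 2019, when Quake II RTX added path-traced lighting.

Quake II was a first-person shooter whose engine combined those precomputed lightmaps with simple dynamic lights, such as the glow of a rocket or a muzzle flash, which brightened nearby surfaces in real time. The result was a more immersive and atmospheric experience than most earlier shooters offered.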

The game’s engine also supported colored lighting, which allowed light sources to tint surfaces rather than merely brighten or darken them. This was a significant step forward for 3D gaming at the time, as it gave each area of a level its own mood and atmosphere.
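Colored lighting of this kind is commonly implemented by multiplying a surface's texture color by a red, green, and blue light value. The Python sketch below shows that arithmetic under simple assumptions (8-bit channels, one light sample per pixel). It is an illustration with hypothetical function names, not code from the Quake II engine.

```python
def shade(texel, light):
    """Modulate an RGB texture colour by an RGB light value (0..255 per channel)."""
    return tuple(t * l // 255 for t, l in zip(texel, light))


def add_lights(baked, dynamic):
    """Add a dynamic light on top of a precomputed one, clamping at full brightness."""
    return tuple(min(255, b + d) for b, d in zip(baked, dynamic))


grey_wall = (200, 200, 200)

# White light only brightens or darkens the surface.
dim_white = shade(grey_wall, (128, 128, 128))

# Orange light tints the same wall instead.
orange_lit = shade(grey_wall, (255, 128, 0))

# A nearby dynamic light temporarily boosts the precomputed lighting.
boosted = add_lights((100, 100, 100), (200, 50, 0))
flash_lit = shade(grey_wall, boosted)
```

With a single brightness value, all three channels would always scale together; keeping a separate value per channel is what lets an orange lamp or a red alarm light change the hue of a wall.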

It was also among the early games to ship with support for hardware-accelerated rendering through OpenGL on 3D graphics cards, which allowed for smoother gameplay, higher resolutions, and cleaner visuals than software rendering alone.

Quake II was a major technological breakthrough for its time, and it paved the way for many of the advanced graphics technologies used in modern games.

As technology continues to advance and gaming PCs get more powerful, we can expect to see even more incredible advancements in gaming graphics in the future. From virtual and augmented reality to photorealistic graphics, the possibilities are endless. As developers, we must continue to push the boundaries of what is possible and strive to create the most immersive and realistic gaming experiences for players.

The evolution of gaming graphics has been an exciting journey, one that has brought us from simple, pixelated graphics to highly realistic and immersive worlds. As technology continues to evolve, we can look forward to even more incredible advancements in the future, and the opportunity to create truly breathtaking gaming experiences for players.
